VR Shared - Meta Avatars Integration

Overview

This sample explains how to integrate Meta avatars into a Fusion application that uses the shared topology.

The main focus is to:

- synchronize avatars using Fusion networked variables

- integrate lip sync with Fusion Voice

Technical Info

This sample uses the Shared Mode topology.

The project has been developed with:

- Unity 2021.3.7f1

- Oculus XR Plugin 3.0.2

- Meta Avatars SDK 18.0

- Photon Fusion 1.1.3f 599

- Photon Voice 2.50

Before You Start

To run the sample:

- Create a Fusion AppId in the PhotonEngine Dashboard and paste it into the App Id Fusion field in Real Time Settings (reachable from the Fusion menu).

- Create a Voice AppId in the PhotonEngine Dashboard and paste it into the App Id Voice field in Real Time Settings.

- Then load the Scenes/OculusDemo scene and press Play.

Download

| Version | Release Date | Download |
|---|---|---|
| 1.1.3 | May 05, 2023 | Fusion VR Shared Meta Avatar Integration 1.1.3 Build 191 |

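Handling Input

Meta Quest

- Teleport: press A, B, X, Y, or any stick to display a pointer. When you release the button, you teleport to the target if it is an accepted one.

- Grab: first put your hand over the object, then grab it with the controller grab button.

The sample supports Meta hand tracking, so you can also teleport and grab objects with the pinch gesture.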

Folder Structure

The /Oculus folder contains the Meta SDK.

The /Photon folder contains the Fusion and Photon Voice SDKs.

The /Photon/FusionXRShared folder contains the rig and grabbing logic coming from the VR Shared sample, which forms a FusionXRShared light SDK that can be shared with other projects.

The /Photon/FusionXRShared/Extensions folder contains extensions for FusionXRShared: reusable features such as synchronized rays and locomotion validation.

The /Photon/FusionXRShared/Extensions/OculusIntegration folder contains the prefabs and scripts developed for the Meta SDK integration.

The /StreamingAssets folder contains prebuilt Meta avatars.

The /XR folder contains the configuration files for virtual reality.

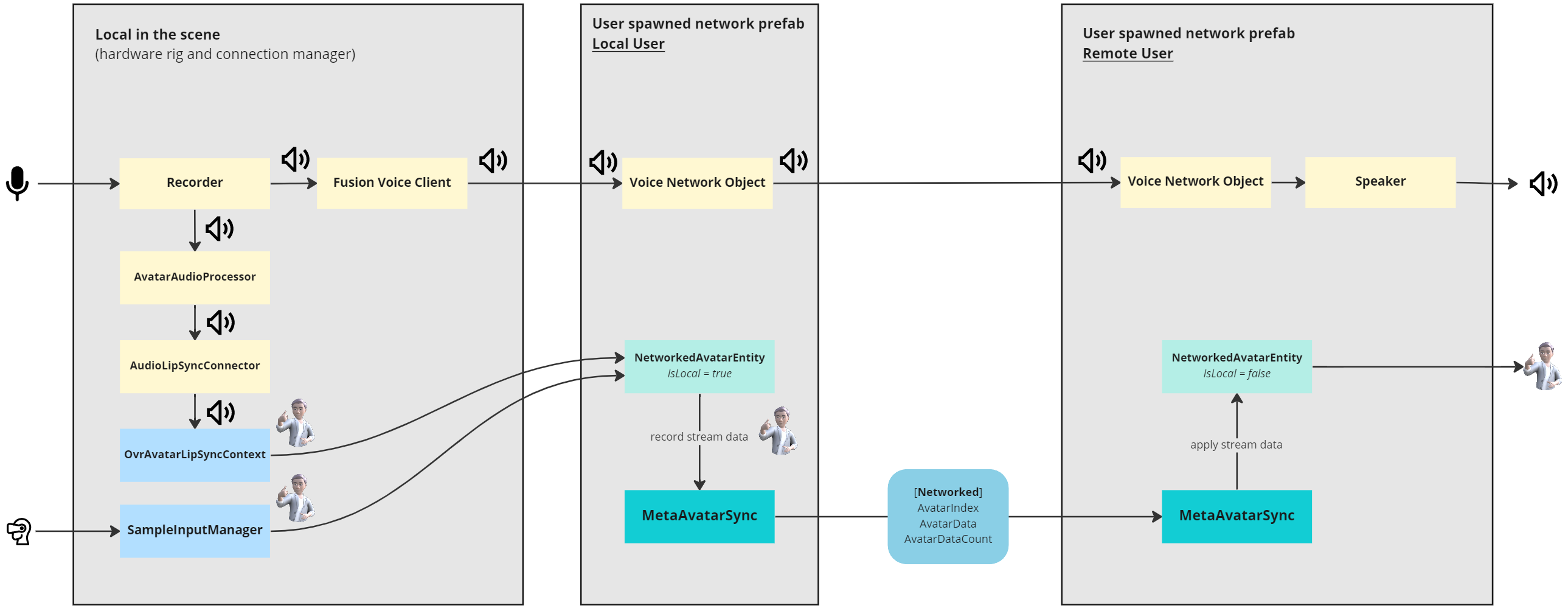

Architecture Overview

For details about the foundations of this sample, such as how the rig is set up, please first see the main VR Shared page.

Scene Setup

OVR Hardware Rig

The scene contains a hardware rig compatible with the Oculus SDK. The MetaAvatar subobject contains everything related to the Meta avatar.

- LipSync has an OvrAvatarLipSyncContext component: it configures the lip sync feature provided by the Oculus SDK.

- BodyTracking has a SampleInputManager component: it comes from the Oculus SDK (Asset/Avatar2/Example/Common/Scripts). It is derived from the OvrAvatarInputManager base class and refers to the OVR Hardware Rig to set the tracking input on the avatar entity.

- AvatarManager has an OvrAvatarManager component: it is used to load Meta avatars.

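Connection Manager

The ConnectionManager handles the connection to the Photon Fusion server and spawns the user network prefab when the OnPlayerJoined callback is called.

A Fusion Voice Client is required to stream audio over the network. Its primary Recorder field refers to the Recorder game object located under the ConnectionManager.

For more details on the integration of Photon Voice with Fusion, see the page: Voice - Fusion Integration.

The ConnectionManager game object also requests microphone authorization through the MicrophoneAuthorization component. The Recorder object is enabled once microphone access has been granted.

The Recorder subobject is in charge of connecting to the microphone. It also holds the AudioLipSyncConnector component, which receives the audio stream from the Recorder and forwards it to the OvrAvatarLipSyncContext.

AudioSource and UpdateConnectionStatus are optional. They only provide audio feedback on the INetworkRunner callback events.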

User Spawned Network Prefab

When the OnPlayerJoined callback is called, the ConnectionManager spawns the user network prefab OculusAvatarNetworkRig Variant.

This prefab contains:

- MetaAvatarSync: it selects a random avatar at start and streams the avatar over the network.

- NetworkedAvatarEntity: it is derived from the Oculus OvrAvatarEntity. It is used to configure the avatar entity depending on whether the network rig represents the local user or a remote user.

Avatar Synchronization

When the user networked prefab is spawned, MetaAvatarSync selects a random avatar.

C#

    // The int overload of Random.Range excludes the upper bound: this picks 0..30
    AvatarIndex = UnityEngine.Random.Range(0, 31);
    Debug.Log($"Loading avatar index {AvatarIndex}");
    avatarEntity.SetAvatarIndex(AvatarIndex);

AvatarIndex is a networked variable, so all players are updated when its value changes.

C#

    [Networked(OnChanged = nameof(OnAvatarIndexChanged))]
    int AvatarIndex { get; set; } = -1;

C#

    static void OnAvatarIndexChanged(Changed<MetaAvatarSync> changed)
    {
        changed.Behaviour.ChangeAvatarIndex();
    }
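The change-callback pattern above can be illustrated without Fusion. The following is only a framework-free sketch: in the sample, Fusion replicates AvatarIndex and invokes OnAvatarIndexChanged, whereas here a plain property setter plays that role. The AvatarIndexHolder class and its AvatarIndexChanged event are hypothetical names introduced for this illustration.

```csharp
using System;

// Hypothetical stand-in for the networked property: a plain setter
// replaces Fusion's replication and change detection.
public class AvatarIndexHolder
{
    private int avatarIndex = -1;   // -1 means "no avatar selected yet"

    // Hypothetical event, raised only when the value actually changes.
    public event Action<int> AvatarIndexChanged;

    public int AvatarIndex
    {
        get => avatarIndex;
        set
        {
            if (value == avatarIndex) return;   // unchanged value: no notification
            avatarIndex = value;
            AvatarIndexChanged?.Invoke(value);  // every listener reloads the avatar
        }
    }
}

public static class AvatarIndexDemo
{
    public static void Main()
    {
        var holder = new AvatarIndexHolder();
        holder.AvatarIndexChanged += index => Console.WriteLine($"Loading avatar index {index}");

        holder.AvatarIndex = 12;  // notifies listeners
        holder.AvatarIndex = 12;  // same value, ignored
        holder.AvatarIndex = 3;   // notifies listeners again
    }
}
```

The point of the pattern is that the avatar is reloaded in reaction to a state change rather than by an explicit message, so a player who joins late and receives the current state ends up with the same avatar as everyone else.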

The SampleInputManager component on the hardware rig tracks the user's movements.

If the player's network rig represents the local user, the SampleInputManager is referenced by the NetworkedAvatarEntity.

This setup is done by MetaAvatarSync (ConfigureAsLocalAvatar()).

At each LateUpdate(), MetaAvatarSync captures the avatar data of the local player.

C#

    private void LateUpdate()
    {
        // Local avatar has fully updated this frame and can send data to the network
        if (Object.HasInputAuthority)
        {
            CaptureAvatarData();
        }
    }

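The CaptureLODAvatar method gets the avatar entity stream buffer and copies it into a networked variable called AvatarData.

The capacity is limited to 1200 bytes, which is enough to stream a Meta avatar in Medium or High LOD.

Note that, to keep things simple, this sample only streams the Medium LOD avatar.

The buffer size, AvatarDataCount, is also synchronized over the network.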

C#

    [Networked(OnChanged = nameof(OnAvatarDataChanged)), Capacity(1200)]
    public NetworkArray<byte> AvatarData { get; }

    [Networked]
    public uint AvatarDataCount { get; set; }
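To show why the byte count is synchronized alongside the fixed-capacity buffer, here is a minimal sketch in plain C# with no Fusion or Meta SDK dependency. AvatarStreamState, TryWrite and ReadValidBytes are hypothetical names for this illustration and are not part of the sample; only the 1200 capacity and the AvatarData / AvatarDataCount names come from the text above.

```csharp
using System;

// Hypothetical stand-in for the networked state: a fixed-capacity byte
// buffer plus the number of valid bytes it currently holds.
public class AvatarStreamState
{
    public const int Capacity = 1200;   // same capacity as in the sample
    public readonly byte[] AvatarData = new byte[Capacity];
    public uint AvatarDataCount;

    // Sender side: copy one captured stream buffer into the fixed buffer.
    // Returns false and leaves the state untouched if the data does not fit.
    public bool TryWrite(byte[] streamBuffer, int length)
    {
        if (length < 0 || length > streamBuffer.Length || length > Capacity)
        {
            return false;
        }
        Array.Copy(streamBuffer, AvatarData, length);
        AvatarDataCount = (uint)length;
        return true;
    }

    // Receiver side: read back only the valid bytes, not the whole buffer.
    public byte[] ReadValidBytes()
    {
        var result = new byte[AvatarDataCount];
        Array.Copy(AvatarData, result, (int)AvatarDataCount);
        return result;
    }
}
```

Because the array always has the same capacity, the receiver cannot tell from the array alone how many bytes belong to the current frame, so the count has to travel with the data.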

So, when the avatar stream buffer is updated, remote users are notified and apply the received data on the network rig that represents the remote player.

C#

    static void OnAvatarDataChanged(Changed<MetaAvatarSync> changed)
    {
        changed.Behaviour.ApplyAvatarData();
    }

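Lip Sync

The microphone initialization is done by the Photon Voice Recorder.

The OvrAvatarLipSyncContext on the OVRHardwareRig is configured to expect direct calls that feed it with audio buffers.

A class hooks into the audio read by the recorder and forwards it to the OvrAvatarLipSyncContext, as detailed below.

The Recorder class can forward the audio buffers it reads to classes implementing the IProcessor interface.

For more details on how to create a custom audio processor, see the page: Photon Voice - Frequently Asked Questions.

To register such a processor on the voice connection, a VoiceComponent subclass, AudioLipSyncConnector, is added to the same game object as the Recorder.

This component receives the PhotonVoiceCreated and PhotonVoiceRemoved callbacks, which makes it possible to add a post-processor to the connected voice.

The connected post-processor is an AvatarAudioProcessor, which implements IProcessor<float>.

During the player connection, the MetaAvatarSync component searches for the AudioLipSyncConnector located on the Recorder and sets the lipSyncContext field of this processor.

As a result, each time the Recorder calls the AvatarAudioProcessor Process callback, ProcessAudioSamples is called on the OvrAvatarLipSyncContext with the received audio buffer, which ensures that lip sync is computed on the avatar model.

This way, lip sync is streamed together with the other avatar body information: it is part of the data captured with RecordStreamData_AutoBuffer on the avatar entity during the LateUpdate() of MetaAvatarSync.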
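The forwarding step described above can be sketched as follows. This is a simplified illustration and not the sample's AvatarAudioProcessor: IAudioProcessor is a hypothetical local stand-in for Photon Voice's IProcessor<float>, ILipSyncSink is a hypothetical stand-in for the lip sync context, and the channel count is assumed to be passed in by whoever creates the processor.

```csharp
using System;

// Hypothetical stand-in for Photon Voice's IProcessor<float>.
public interface IAudioProcessor : IDisposable
{
    float[] Process(float[] buf);
}

// Hypothetical stand-in for the lip sync context's audio entry point.
public interface ILipSyncSink
{
    void ProcessAudioSamples(float[] samples, int channels);
}

public class ForwardingAudioProcessor : IAudioProcessor
{
    private readonly int channels;

    // Set later, once the local avatar has been spawned and configured.
    public ILipSyncSink LipSyncContext;

    public ForwardingAudioProcessor(int channels)
    {
        this.channels = channels;
    }

    public float[] Process(float[] buf)
    {
        // Forward the samples if a lip sync context is attached,
        // then return the buffer unchanged so voice transmission is unaffected.
        LipSyncContext?.ProcessAudioSamples(buf, channels);
        return buf;
    }

    public void Dispose()
    {
        LipSyncContext = null;
    }
}
```

Leaving the sink field unset until the local avatar exists mirrors the order described above: the processor can be registered as soon as the voice is created, and it simply passes audio through until the lip sync context is assigned.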

Summary

To sum up, thanks to the MetaAvatarSync ConfigureAsLocalAvatar() method, when the user networked prefab is spawned for the local user, the associated NetworkAvatarEntity receives data from:

- OvrAvatarLipSyncContext for lip sync

- SampleInputManager for body tracking

The data is streamed over the network using networked variables.

When the user networked prefab is spawned for a remote user, MetaAvatarSync ConfigureAsRemoteAvatar() is called, and the associated NetworkAvatarEntity class builds and animates the avatar from the streamed data.